AI x Healthcare: 3 Impending Singularities

Points beyond which healthcare will change so profoundly as a result of advances in AI that we'll barely recognize it any more

Just as I started my new role at Endless, ChatGPT’s launch in November 2022 coincidentally marked the beginning of a frenzied new era of AI. In the first few days of experimenting with this exciting new toy, I asked it, among other things, personal health questions, such as explaining lab test reports and advising on an inherited genetic ailment. Although it is not a health-specific model, I was blown away by how comprehensive and accurate its suggestions were, better than those of most physicians I had consulted. Moreover, it was infinitely more patient and empathetic in its responses to my never-ending questions. It even generated a comprehensive clinical note and an electronic health record in the widely adopted FHIR format when I asked it to, without so much as a frown or sigh! It didn’t take me long to realize we were on the verge of a revolution unlike anything we have ever seen in global health.

As a result, I have spent the past 18 months obsessed with AI developments, especially the increasingly capable and sophisticated GenAI models targeted at medical use cases. I spent late nights and weekends reading newsletters and arXiv papers to keep up with the rapidly advancing technology and the flood of emerging research. Here are a few curated highlights:

AI passing medical licensing exams: ChatGPT and other AIs passed the US Medical Licensing Exam with flying colors, and soon after, they excelled in exams in Japan, Germany, and Israel. The rate of improvement was also notable: while GPT-3.5 scored below passing marks, GPT-4, released only a few months later, often scored in the 90th+ percentile of medical students.

AI better than human doctors in diagnostic conversations and even empathy: A paper (later published in Nature [paywalled]) showed that a conversational AI outperformed human doctors in a blinded trial on 26 of 28 key dimensions of care (which, mind-blowingly, include empathy).

Generalist foundation models with some creative prompting techniques outperforming specialized medically “fine-tuned” models (“Medprompt” Paper by Microsoft), possibly indicating [my opinion here] that cross-disciplinary expertise somehow provides an added benefit to medical reasoning.

GPT-4o gaining the ability to comprehend the top 50 languages and speak in a natural-sounding voice across those languages. Also, a Swahili model fine-tuned in Kenya, and an African startup fine-tuning voice recognition for 200 African accents of English.

Nvidia and Hippocratic AI joined forces to train AI Nurses that can converse on video and only cost $9 an hour.

Multimodal models able to analyze medical images: GPT-4 (not the latest Omni model) performed at the level of a post-grad year-2 student on radiology exam questions, so fine-tuned multimodal models could realistically perform on par with human radiologists in the near future.

AI can even outperform human experts on diagnosing the long-tail of uncommon diseases, even without expressly human-labeled datasets (humans under-index rare disease probabilities, whereas AIs are more objective. AIs also “pick up subtle patterns humans miss”)

GPT-4 approaches expert level performance on ophthalmology exam questions, and significantly outperforms trainees and junior doctors (paper)

Early indications that hallucinations are greatly reduced with larger context windows, RAG (i.e. searching a knowledge base) and other techniques. Hallucinations have been the single biggest concern about using GenAI in medical use cases.

AI has superhuman persuasive abilities, which may be relevant in nudging people towards healthy behavior change to manage chronic diseases.

As I kept up with these developments, I thought a lot about the upcoming disruption to healthcare as a result of generative AI. Most conversations I heard were about how AI would fit within existing health systems at the margins (e.g. transcribing medical conversations, improving clinical decision support, etc.). But I felt that the vast majority of health experts were missing the big picture and not analyzing the trends and their potential impact from first principles. That’s what I will attempt to do today.

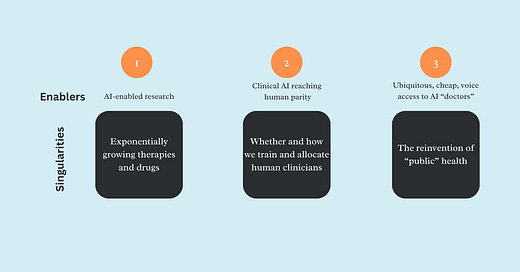

After I read a recent article by Ethan Mollick (one of the most influential applied AI researchers now, founder of the Wharton GenAI Lab and author of the One Useful Thing newsletter, which I highly recommend), I realized that my thoughts on this could be framed best by the concept of a “Singularity”, i.e. a point at which changes become so profound and radical that it is hard to predict the future beyond it. Although the timeline is still a guess right now, I’m quite convinced these singularities will relatively soon transform how we think of, design and experience healthcare.

At least the first singularity I speak about is being widely discussed already, and I will just summarize it, but I think the ones that follow are logical consequences of the first. And #3 on the list, which I have not heard anyone talk about, might be the most consequential of all.

Singularity #1: Explosion of clinical therapies and novel drugs

We went from pre-schooler level AI (GPT-2) to advanced high-schooler level AI (GPT-4) within four years. With current exponential growth and significant investments in compute, the next leap to PhD-level capabilities or beyond should occur in roughly two years.

Combine this with the revolution in protein mapping (thanks to AlphaFold compressing a billion years of research into months), CRISPR-based gene therapies, and in-silico drug development —with each of these intersecting and compounding on each other—and we’re heading for an unprecedented acceleration in clinical research and drug development. We’re already seeing the early indications of precision therapies like the ‘miracle drug’ that helps obese patients lose weight, or the drug currently in Phase 1 trials that enables adults to regrow their teeth. In the not-too-distant future, expect therapies that cure cancer, prevent/delay onset of inherited/chronic illnesses like diabetes and Alzheimer’s, drugs that slow aging and provide metabolic benefits similar to exercise, and vaccines that prevent deaths from currently fatal diseases like Ebola.

(Please note that I’m intentionally not focusing on intra-country and global equity issues in this post for brevity’s sake. Of course there will be friction with adoption and regulations. These latest therapies might be more or less expensive than current drugs, depending largely on patent regulations. Existing inequities w.r.t. R&D funding and baseline income may be amplified. Etc. etc.)

Not only will research in labs accelerate, but research will also enter the consultation chamber, and the lines between practice and research will start to blur. More and more clinical encounters will get digitized and transcribed. Again, thanks to LLMs and other natural language processing AIs, soon we will have ambient listening as a way to capture pertinent data to update patients’ medical history from the raw conversations, instead of nurses/doctors having to type into EMRs, which has historically been the greatest barrier to adoption and regular usage. We will then have real-time and accurate data from millions of patient encounters. That data will help validate the outcomes of therapies in real-world settings, and update the algorithms and protocols that determine the optimal therapy for a particular patient given their unique health history, ethnicity, age, sex and other information.

Digitized clinical encounters will also make it possible to run clinical experiments automatically, much as A/B tests are run in software development to optimize outcomes, although ethical standards and IRB protocols will be crucial to think through for such novel types of research. And when we finally allow AI to drive the protocols guiding the care encounter, any updated protocol could be deployed instantaneously all over the world for better clinical encounters and outcomes (it currently takes ~17 years for a new protocol to go from research to practice!).

While it’s currently hard to imagine such an exciting future in low-resource settings like Sub-Saharan Africa and South Asia, I think, counter-intuitively, some of these countries might actually leapfrog ahead of many Western nations and their fragmented and onerously regulated health systems. Already, initiatives like GIDH by the WHO are showcasing success stories from countries like Bangladesh and India that have made tremendous progress in digitizing their health systems, and providing a roadmap for other low-resource countries to adopt interoperable data standards and digital platforms for every type of care encounter.

Singularity #2: Whether and how we train medical professionals and allocate them in health systems

This rapidly accelerating research will lead us to, or at least contribute to, the next singularity. The last studies I know of estimated the doubling time of medical knowledge at just 73 days in 2020 (and it has surely shortened further since then). Even very conservatively, this means that a medical student entering a 7-year program leading to a medical practice license experiences at least 5-6 doublings (a whopping ~50x) of medical knowledge by the time they graduate, which is impossible to reflect in updated curricula. Beyond licensure, no amount of continuing education can help a practicing physician keep up with the flood of new information and protocols, which partly contributes to the egregious delay in translating research evidence into practice.
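For readers who want to check the arithmetic, here is a minimal sketch. It assumes knowledge grows by clean doublings at a constant rate, which is a simplification, and the function names are purely illustrative. It contrasts the 73-day estimate taken at face value with the far more conservative 5-6 doublings used above, which give 32x to 64x and so bracket the ~50x figure.

```python
def doublings(years: float, doubling_time_days: float) -> float:
    """How many times a quantity doubles over `years` (constant rate assumed)."""
    return years * 365 / doubling_time_days


def growth_factor(n_doublings: float) -> float:
    """Total multiplication after `n_doublings` doublings."""
    return 2 ** n_doublings


PROGRAM_YEARS = 7  # length of the medical program cited in the text

# Taking the 73-day estimate at face value over the whole program.
literal = doublings(PROGRAM_YEARS, 73)
print(f"73-day doubling: {literal:.0f} doublings, {growth_factor(literal):.2e}x")

# The conservative 5-6 doublings used in the text, and the doubling
# time in years that each would imply over a 7-year program.
for n in (5, 6):
    implied_years = PROGRAM_YEARS / n
    print(f"{n} doublings: {growth_factor(n)}x, one doubling every {implied_years:.2f} years")
```

Either way the conclusion is the same: no curriculum revision cycle can keep pace with growth on this scale.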

Another dimension of this problem is the hyper-specialization it causes these days in medicine, leading to increasing fragmentation, conflicting advice and physician egos, more drug interactions, and even mistakes in diagnosing conditions accurately. A friend who recently went through a terrifying episode of post-natal complications and surgeries at Johns Hopkins Hospital had her gynecologists fail to identify a drug-induced fever, which her husband, an internal medicine specialist, had predicted well in advance. As the subbranches of medicine proliferate and the volume of material in each expands exponentially, this problem will likely go from bad to worse.

Last but not least, the quality of doctors is highly variable, and Johns Hopkins doctors are likely in the top 1% of all doctors worldwide, while the average doctor in a place like Bangladesh likely falls in the bottom two quartiles.

Now contrast this with the fact that AI is rapidly reaching the level of top doctors, and will soon surpass them, in history taking, clinical conversations and diagnostic capabilities. With increasing context windows, it will be able to take a holistic view of a patient’s entire medical history, and follow the latest protocols and medical evidence accurately. It will even be able to seamlessly traverse multiple specialties relevant to the case at hand, and “consult” with other AI specialists without any ego conflicts or time lags.

Such a super-human clinical agent will be available 24/7, just as much in Botswana (which had <1 oncologist per million population in 2010) as in Boston, with as little as an internet-connected device, and at a fraction of the cost of training and paying a human doctor (estimated at $21-48K in sub-Saharan countries). And “they” will never flee the country for better opportunities, and will happily serve in their designated rural outposts.

People are rightly worried about AI clinicians replacing human doctors, citing bias and hallucinations as the top two concerns. We must absolutely have the right evaluation frameworks and localization mechanisms to ensure AI performs well in each context. But we must also remember that we are competing against human doctors in real-world settings, where poor training and skills, out-of-date and/or forgotten knowledge, short attention spans, implicit bias and stigma, and perverse incentives cause even the best doctors to make mistakes and suggest inaccurate treatments. That is not a very high bar to clear.

I think the biggest challenge will be that even these superhuman AI agents will have to make high-stakes decisions with imperfect information (e.g. a patient who cannot afford a CT scan or even travel for an X-ray), and treat with the limited therapies that are often available in resource-constrained settings. AI guardrails and benchmarks will have to be nuanced enough to navigate such ambiguous moral and ethical dilemmas.

Even after we figure that out, regulatory and liability considerations might still prevent AI from taking the place of human doctors in the Western world for the foreseeable future, but in countries like Botswana, the economics will soon make it unjustifiable to train any more human physicians, at least through medical education in its current form. Medical schools might pivot to train many more human “caregivers” (e.g. nurses, paramedics, CHWs) instead, who are trained on the soft skills of taking care of patients while being clinically guided by an AI.

Singularity #3: The transition from “public” to personalized health systems

Even if health systems don’t respond rationally to these developments because of lobbies such as doctors’ unions (which I have personally faced in Bangladesh with Jeeon) and regulatory capture, people will likely make personal choices that could seriously undermine health system function and performance unless those systems are fundamentally reimagined.

Let’s break it down a bit. Public health as a discipline serves broadly four key functions:

1. Managing people’s health needs for common health problems/conditions they face, including infectious and non-communicable diseases, pregnancies, etc. in the absence of clinicians to handle every case individually

2. Educational, preventive and promotive communications and interventions to prevent risky behaviors and promote healthy ones

3. Macro scale activities such as immunizations, surveillance of diseases, predicting epidemics, tackling antibiotic resistance, etc.

4. Addressing non-clinical (social, behavioral, environmental, emergencies, etc.) drivers of health

When AI clinical agents reach human parity, one of the key binding constraints on the first function —clinical expertise— will suddenly be lifted. With tailored medical advice and treatment plans based on the latest clinical knowledge, on-demand 24/7, each person will have access to their own personal AI doctor, massively disrupting Function #1. Around the same time, AI will also be able to converse empathetically in local languages and dialects, which means people will likely prefer the convenience of consulting such an AI at home over making inconvenient, time-consuming, and expensive trips to health facilities. Not only that, the AI will be able to persuade and nudge people towards better health in a personalized way as well, informed by the latest in behavioral sciences (significantly improving on Function #2 and eliminating the need for one-size-fits-all communications campaigns).

(If you’re still not convinced, GPT-4o is already freely available to anyone on the planet. Try downloading the ChatGPT official app on your phone and have a voice conversation with it regarding your most recent health problem, starting by asking it to “Act like a qualified health professional”. Then project the rate of progress forward a few years. You’ll know what I mean.)

Resisting this inevitable shift in user behavior will be counterproductive for health systems, because we will lose out on opportunities to localize and de-bias these systems, ensure proper guardrails, target high-risk patients with health system interventions like a CHW visit, and detect trends and patterns from the day-to-day conversations people have with their AIs. Indeed, the micro data from millions of conversations with AIs can also easily be aggregated up to develop the most high-resolution and real-time disease surveillance system we have ever seen (Function #3). Most importantly, perhaps, such deep integration and fundamental redesign can massively improve access and care experiences for patients, and save vast amounts of health resources while improving outcomes. Conversely, if health systems fail to adapt, they will likely be completely bypassed by people voting with their feet (or rather, fingers).

***

The above is my own (hopefully logical) extrapolation from current trends. However, it is still conjecture, and I have not addressed many pertinent questions. For example:

What will be the new binding constraints after clinical knowledge is democratized? Will it be access to cutting-edge diagnostics, therapies and drugs due to poor infrastructure (cold chains, etc.) and affordability? Will it be the inability of regulatory mechanisms to keep up with the pace of change? What else?

When we no longer have the blessing of ignorance and can pinpoint exactly which hundred million people around the world need urgent heart surgery or cutting-edge gene therapy (thanks to their AI doctor), but only have the resources and infrastructure to deliver it to a tiny segment of well-off patients, how will we deal with the resulting moral dilemmas?

As AI takes on more diagnostic and treatment roles, how do we address the ethical implications of AI making life-and-death decisions? Who will we hold legally accountable for AI-driven medical errors? How will we choose between conservatism (only allowing AI to practice if it exceeds human accuracy by a large margin, as in the case of self-driving cars) and pragmatism (allowing AI to take the place of vacant doctor positions if it clears the relatively low bar for a medical license)?

I therefore think this topic deserves a wider conversation within this community.

What do you think? Please take 5 minutes to leave a comment or question on this post or join this Chat room. Feel free to also reply to this email newsletter directly to share your reflections with me.

Great article Rubayat. The change to public health is incredible, exciting, scary, and revolutionary. I love the 3 axes you're considering here. Even in the near term, I think the changes you describe are going to compound rapidly. For example, "Thanks to LLMs and other natural language processing AIs, soon we will have ambient listening as a way to capture pertinent data to update patients’ medical history from the raw conversations" might seem like incremental progress to many people. But from 12+ years studying digital health I can't describe what a difference this would make to CHW<>patient interactions. Already far too many CHWs are completely overburdened filling out paper forms, digital forms, and whatever latest sexy-but-underbaked digital tool is in vogue. We once did a time study in Ghana and watched a vaccinator spend 20 minutes delivering a vaccine, of which 18 minutes were spent on manual and digital paperwork. While the hurdles ahead are huge, the rewards could be more so if we navigate these changes well.

Thanks, Rubayat, for this compilation of AI breakthroughs. Generative AI addresses one of the major challenges we have been seeing in AI, especially deep learning algorithms being called black boxes. I spent the last year of my PhD on the applications of AI in healthcare just to explain how deep learning models make their decisions. I argued a lot that these algorithms can be explained the same way a human doctor does, as they mimic the working principles of the human brain. Now we are seeing GenAI generating medical reports from their analysis.